The Human Residual

What remains when everything automatable is automated

Every technological transition follows the same sequence. First, it replaces the most repetitive tasks. Then, it absorbs processes that once required training, experience, or even judgment. At each stage, the reaction is identical: disbelief, then resistance, then adaptation. And at each stage, something remains that the new system cannot fully absorb.

I’ve been thinking about this pattern a lot over the past year, as generative AI has moved from novelty to infrastructure. The question I keep coming back to is not whether AI will displace work. It obviously will, and it already is. The question is what remains after the displacement, and whether that remainder is something people can deliberately cultivate.

I call it the human residual.

What the Residual Is Not

The instinct is to define it in comforting terms: creativity, empathy, intelligence. These words appear in every report on the future of work, repeated so often that they have lost analytical precision. They are too vague to serve as a framework for action.

The deeper residual is a condition, not a trait. Specifically, it is the ability to operate without complete information and still produce outcomes that matter.

Machines, even very good ones, need specified parameters. They optimize within limits set by others. The human residual begins exactly where those limits end: where the problem is not yet fully formulated, where the variables are not yet identified, where the appropriate framework has not yet been chosen.

This is a form of processing that integrates experience, context, contradiction, and risk tolerance simultaneously. It resists decomposition. And because it resists decomposition, it resists automation.

I think a useful analogy here is the difference between a GPS navigation system and an experienced local driver. The GPS is better at calculating routes. It has more data, it updates in real time, and it never gets tired. But drop both of them in an unfamiliar city where the maps are wrong, the road signs are in a language neither understands, and a political protest is blocking three intersections. The experienced driver will outperform the GPS. Not because the driver is faster at computation, but because the driver can operate in a space where the parameters themselves are unknown.

That is the residual.

The Scale of What Is Being Displaced

To understand why this matters now, it helps to look at the numbers.

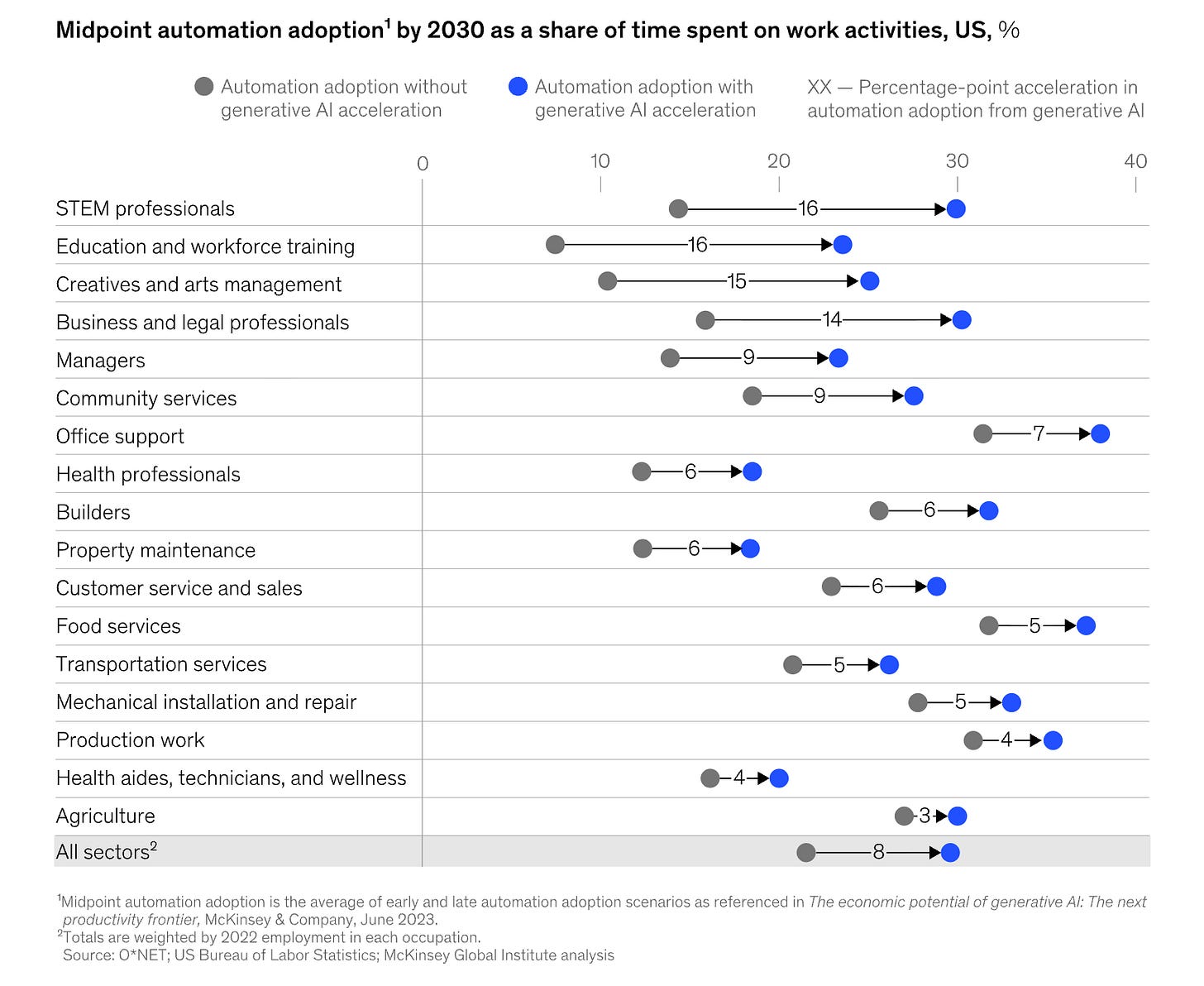

McKinsey estimates that by 2030, generative AI will accelerate automation adoption across all US sectors by an average of 8 percentage points. That is the difference between roughly 22% and 30% of all work hours carrying automation potential. But the aggregate number obscures the real story: the acceleration is not uniform, and where it hits hardest is counterintuitive.

The biggest jumps are not in manual labor or factory work. They are in STEM professions (+16 percentage points), education and workforce training (+16), creative and arts management (+15), and business and legal professionals (+14). Meanwhile, sectors like agriculture (+3), production work (+4), and health aides (+4) see relatively modest increases. Office support, which was already the most automatable category before generative AI, adds only another 7 percentage points, as it was already near the ceiling.

This is, in a real sense, unprecedented. For the first time, the automation wave is moving up the income and education ladder rather than across the factory floor. McKinsey calls this “reverse skill bias”: generative AI disproportionately impacts higher-educated knowledge workers because its core capability is processing natural language, which is the foundation of most white-collar work.

The World Economic Forum’s Future of Jobs Report 2025 adds another dimension. They estimate that 39% of existing skill sets will become outdated or transformed between 2025 and 2030, and project that AI could help generate 170 million new jobs globally by 2030 while displacing roughly 92 million, for a net gain of about 78 million positions. PwC’s 2025 Global AI Jobs Barometer reinforces the picture from a different angle: skill demands are changing 66% faster in AI-exposed occupations than in the least exposed roles, up from 25% the previous year.
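The arithmetic behind the WEF figures quoted above is simple enough to check directly. The sketch below uses only those two numbers; the helper names and the "gross churn" measure (jobs created plus jobs displaced) are illustrative assumptions, not terms taken from the report.

```python
# Figures quoted above from the WEF Future of Jobs Report 2025, in millions of jobs.
JOBS_CREATED_M = 170
JOBS_DISPLACED_M = 92


def net_change(created: int, displaced: int) -> int:
    """Net change in positions: new jobs minus displaced jobs."""
    return created - displaced


def gross_churn(created: int, displaced: int) -> int:
    """Illustrative measure (an assumption here): total positions that appear or disappear."""
    return created + displaced


net = net_change(JOBS_CREATED_M, JOBS_DISPLACED_M)
churn = gross_churn(JOBS_CREATED_M, JOBS_DISPLACED_M)

print(f"net gain: {net} million")        # net gain: 78 million
print(f"gross churn: {churn} million")   # gross churn: 262 million
```

The net figure is the headline, but the churn figure is a reminder that far more positions change hands than the net gain alone suggests.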

These are not small numbers. But they tell us what is being displaced, not what endures. The residual is what endures.

This matters because those 78 million net new jobs are not the same as the old ones. They cluster disproportionately around roles that require the kind of unstructured judgment, cross-domain synthesis, and consequential decision-making I have been describing.

The Compounding Problem

Consider the trajectory most professionals follow: skill acquisition, certification, specialization, optimization. Every step in this sequence builds capabilities that, by definition, are encodable. If a competency can be taught systematically, it can eventually be performed systematically by something other than a human.

The investment compounds, but it compounds in a category with a declining half-life.
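One way to make the half-life image concrete is a toy model, offered as a rough sketch and not as a measured result. It assumes a worker adds one unit of skill value per year and that existing skill value decays with a fixed half-life. The function name `skill_stock` and the half-lives of 10 and 3 years are hypothetical, chosen only for illustration.

```python
def skill_stock(years: int, half_life_years: float, yearly_investment: float = 1.0) -> float:
    """Accumulated skill value after `years` of steady investment.

    Toy assumption: existing value halves every `half_life_years`,
    and one fresh unit of value is added at the end of each year.
    """
    yearly_decay = 0.5 ** (1.0 / half_life_years)  # fraction of value kept per year
    stock = 0.0
    for _ in range(years):
        stock = stock * yearly_decay + yearly_investment
    return stock


# Hypothetical comparison: the same 20 years of effort under two half-lives.
durable = skill_stock(years=20, half_life_years=10)
perishable = skill_stock(years=20, half_life_years=3)

print(f"half-life 10 years: {durable:.1f} units")
print(f"half-life  3 years: {perishable:.1f} units")
```

Under these assumptions, the same twenty years of effort leaves a much smaller stock when the half-life is short, because more of each year's investment goes to replacing what has already decayed.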

This is already measurable. Studies cited in the Stanford AI Index 2025 show productivity gains of roughly 14-15% in structured knowledge work environments, such as call centers, with the biggest boost going to less experienced employees. In other words, AI compresses the gap between a junior worker and a senior one in tasks that can be proceduralized. GitHub Copilot users complete coding tasks 56% faster than non-users. Customer service chatbots now resolve 70% of inquiries without human involvement.

That is precisely the kind of skill accumulation that most career paths are built around. Years of training to get faster, more accurate, and more efficient at defined tasks. AI is quietly eating into the return on that investment.

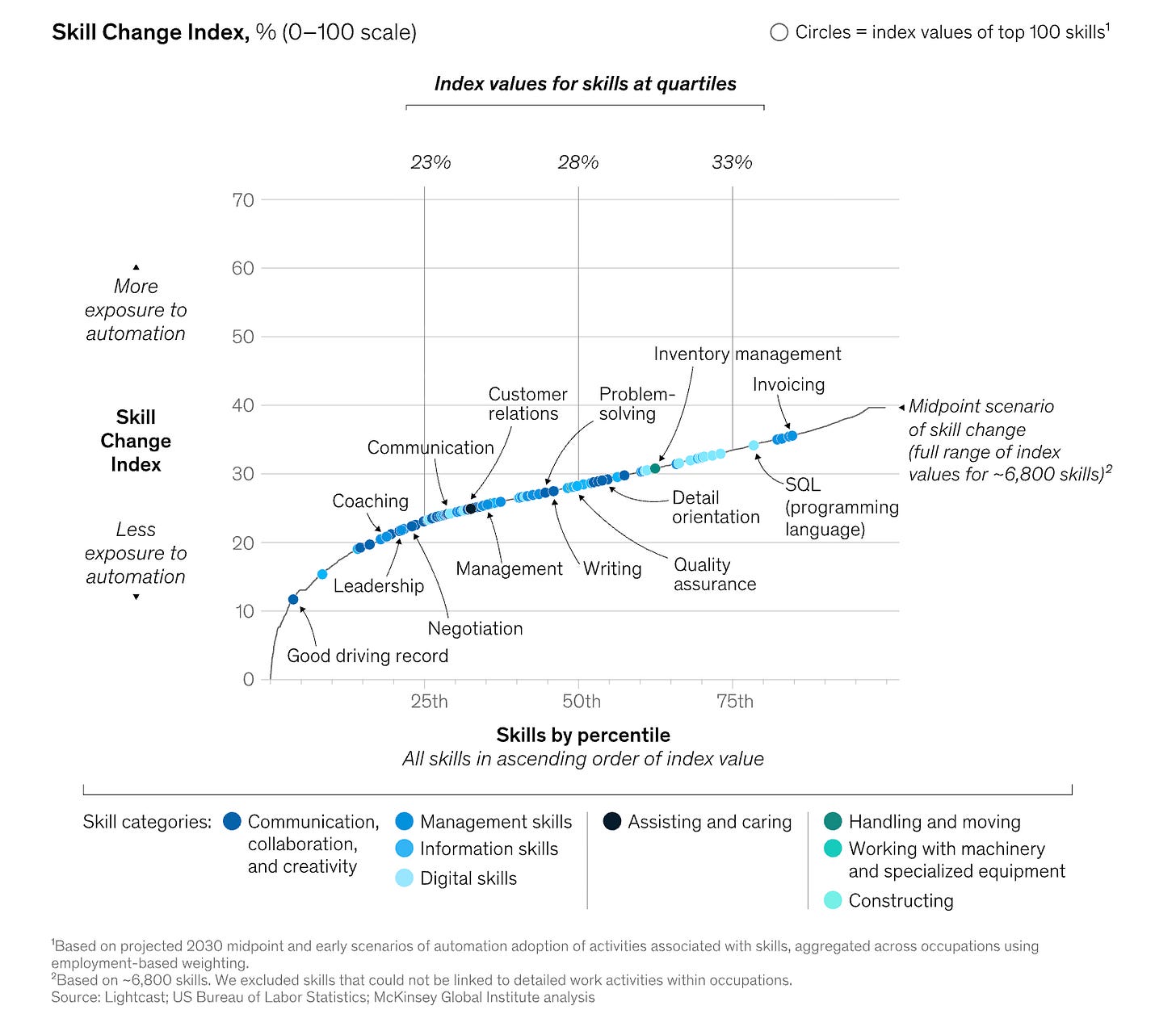

McKinsey’s Skill Change Index makes this visible. They mapped roughly 6,800 skills by their exposure to automation, and the pattern is striking. At the top of the exposure curve are digital skills, information-processing skills, and technical capabilities such as SQL and invoicing. At the bottom, with the least exposure, sit skills like leadership, negotiation, coaching, and management. Writing and communication land somewhere in the middle, which makes sense: the mechanical act of writing is highly automatable, but the judgment about what to communicate, to whom, and why is not.

I see a parallel here with a pattern in financial markets. You can compound capital by being good at something that many people are good at, or by being good at something that very few people are good at. The first strategy works until competition drives the returns to zero. The second strategy works for much longer, because the skill is harder to replicate. Career capital works the same way.

Now consider the alternative trajectory: ambiguity exposure, consequential decision-making, pattern recognition across domains, judgment under complexity.

This second path does not appear on any curriculum because it cannot be transmitted through instruction. It develops through accumulated experience, through learning that occurs only when the outcomes are real and hard to reverse.

The difference between the two trajectories is the difference between building what can be replaced and developing what cannot.

Where the Value Migrates

Organizations face the same structural question. As automation absorbs more of what was previously considered knowledge work, the composition of value shifts.

What becomes less significant: encodable processes, systematized knowledge, repeatable execution.

What grows in importance: the capacity to navigate situations without precedent, make commitments under uncertainty, and maintain coherence when conditions evolve faster than plans can be updated.

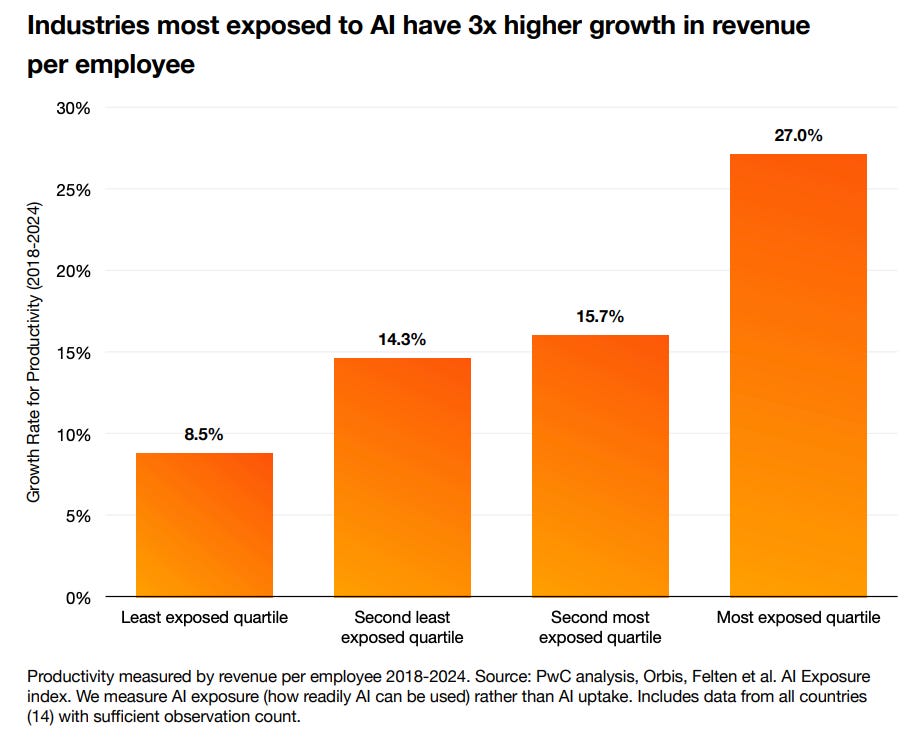

We are already seeing this in the data. PwC’s 2025 Global AI Jobs Barometer measured productivity growth (revenue per employee, 2018-2024) across industries grouped by their level of AI exposure. The results show a clear gradient: industries in the least exposed quartile saw 8.5% cumulative productivity growth, while those in the most exposed quartile saw 27.0%. That is a more than 3x difference, and it has accelerated sharply since 2022.

Workers with AI skills now command a wage premium of 56% over colleagues in the same role without those skills, more than double the 25% premium recorded just one year prior. Jobs requiring AI skills grew 7.5% year-over-year, even as total job postings fell 11.3%.
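The two comparisons above ("more than 3x" and "more than double") can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the PwC figures quoted in the text; the constant and function names are illustrative.

```python
# PwC 2025 Global AI Jobs Barometer figures as quoted above (percent).
LEAST_EXPOSED_GROWTH_PCT = 8.5   # cumulative productivity growth, least exposed quartile
MOST_EXPOSED_GROWTH_PCT = 27.0   # cumulative productivity growth, most exposed quartile
WAGE_PREMIUM_NOW_PCT = 56.0      # current AI-skills wage premium
WAGE_PREMIUM_PRIOR_PCT = 25.0    # premium one year earlier


def ratio(larger: float, smaller: float) -> float:
    """How many times larger the first figure is than the second."""
    return larger / smaller


productivity_ratio = ratio(MOST_EXPOSED_GROWTH_PCT, LEAST_EXPOSED_GROWTH_PCT)
premium_ratio = ratio(WAGE_PREMIUM_NOW_PCT, WAGE_PREMIUM_PRIOR_PCT)

print(f"productivity growth ratio: {productivity_ratio:.2f}x")  # 3.18x
print(f"wage premium ratio: {premium_ratio:.2f}x")              # 2.24x
```

Both ratios are computed from cumulative percentages, so they compare the size of the gains and say nothing about the underlying levels of revenue or pay.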

This tells me something important: the value is not accruing to people who compete with the machines. It is accruing to people who can resolve the questions that machines surface but cannot answer.

The organizations that will compound advantages over time are not necessarily those with the most advanced automation. Automation, by its nature, becomes a commodity. According to Gartner, 72% of enterprises plan to deploy AI agents or copilots by 2026. When nearly everyone has the same tools, the tools themselves cease to be a differentiator. In the early days of the internet, simply having a website was a competitive advantage. Within a few years, it was table stakes, and the advantage shifted to what you did with it.

The durable advantage accrues instead to those who develop the highest concentration of human residual: people whose judgment improves with complexity rather than deteriorating under it.

The Uncomfortable Symmetry

The same technological progress that makes certain human contributions obsolete also makes the remaining human contributions more valuable. The residual does not just survive automation. It appreciates because of it. This is an important structural point, not a feel-good platitude.

When a machine can draft a legal brief in seconds, the value of knowing which legal strategy to pursue in a novel situation goes up. When an AI model can generate a financial projection from any dataset, the value of knowing which questions to ask about the assumptions behind that dataset goes up. The tool compresses the execution layer and, in doing so, expands the premium on the judgment layer.

Go back to the McKinsey Skill Change Index. The skills at the bottom of the exposure curve (leadership, negotiation, and coaching) are not there because they are unimportant. They are there because they require something that resists encoding: the ability to read a room, weigh competing interests, make a call when the data is insufficient, and take responsibility for the outcome. In other words, they operate in the domain of irreducible human judgment.

And as everything above them on that chart gets automated, the relative value of those bottom-of-the-chart skills increases. Not because they change, but because the context around them changes. When procedural output becomes abundant, the things that cannot be proceduralized become scarce by comparison.

As AI capabilities improve, the line between what can be automated and what cannot will become sharper. The residual will continue to appreciate because everything around it is becoming more abundant.

Implications

So the question is whether you are developing the part that cannot be encoded: the part that becomes more relevant as everything around it becomes more efficient.

I do not think this is cause for panic. But I do think it is cause for an honest reassessment of what constitutes valuable professional development. The conventional approach of stacking more certifications, more specializations, and more technical proficiencies is not wrong, but its returns are diminishing faster than most people realize. Meanwhile, the less legible skills, the ones developed through exposure to ambiguity, consequential decisions, and genuine accountability, are compounding in value.

Growth, understood correctly, is not accumulation. It is the progressive development of what remains when everything removable has been removed.